Web workers in JavaScript and when to use them

At bene : studio, we love knowledge sharing to help the community of professionals. To share our 10+ years of experience we have launched a knowledge hub on our blog with regular updates of tutorials, best practices, and open source solutions.

These materials come from our internal workshops with our team of developers and engineers.

Introduction

JavaScript is inherently a single-threaded programming language. The ability to run application logic on multiple threads arrived with the Web Workers API.

Web Workers help make efficient use of multi-core processors; without them, a JavaScript application runs entirely on the single main thread.

The primary purpose of Web Workers is to perform long-running tasks in the background and leave the main thread uninterrupted to keep the UI responsive. Below is a notorious example of how to block your main thread to mimic a long-running task:
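A minimal sketch of such a blocking loop is shown here. The function name `blockMainThread` and the two-second duration are illustrative assumptions, not taken from the original snippet.

```javascript
// Busy-wait for the given number of milliseconds.
// Nothing else can run on this thread until the loop exits.
function blockMainThread(durationMs) {
  const start = Date.now();
  while (Date.now() - start < durationMs) {
    // Intentionally empty: spin until the time is up.
  }
  return Date.now() - start;
}

// While this runs, try to scroll or click on the page.
const elapsedMs = blockMainThread(2000);
console.log(`main:: blocked for ${elapsedMs} ms`);
```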

If you paste this code into the browser console in Developer Tools, the page becomes unresponsive because the code blocks the main thread.

Enough of the primer. The main goal of this article is to find out how to transfer large data objects between the main thread and a worker thread without copying them. We are exploring this because long-running processing tasks on huge datasets do come up, and we need an efficient way to process such data without impacting the user experience.

Concurrency vs parallel processing

Concurrency and Parallel processing are often used interchangeably in multi-threaded software development, but there is a significant difference between them.

In JavaScript, concurrency is achieved through asynchronous programming, which we use all the time. When two different tasks (read: functions) are executed over overlapping time intervals, it is called concurrency. One task may start before the other one completes, and they share the CPU in a time-slicing manner. For example, the setTimeout function lets tasks run in overlapping time periods.

Parallelism, on the other hand, is achieved when two different tasks (functions) are executed at the same instant on multiple processing cores.
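As a small illustration of concurrency on a single thread, the sketch below schedules one task with setTimeout and runs another immediately. The function name `runTasks` and the task labels are assumptions made for this example.

```javascript
// Starts two tasks whose lifetimes overlap on a single thread.
// Returns the array that records the order in which they ran.
function runTasks(onDone) {
  const order = [];

  // Task A is scheduled now but runs later, once the call stack is empty.
  setTimeout(() => {
    order.push('A: timer callback');
    if (onDone) onDone(order);
  }, 0);

  // Task B runs immediately, before the timer callback gets its turn.
  order.push('B: synchronous code');
  return order;
}

runTasks((order) => console.log(order.join(' -> ')));
```

Both tasks are "in flight" at the same time, but only one of them executes at any given moment.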

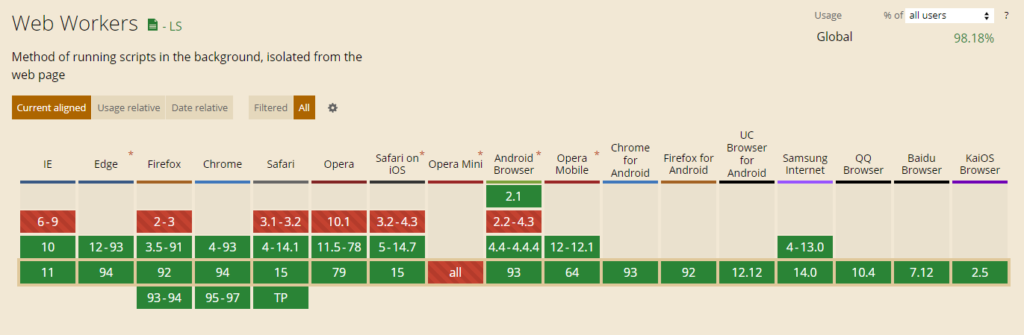

Supported browsers

The support of Web Workers in various browsers can be checked on Caniuse.com.

It has a healthy global coverage of 98%.

Support for the Web Workers API can also be checked at runtime using the snippet below:
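A minimal feature-detection sketch, assuming the usual check for the global `Worker` constructor (the helper name `supportsWebWorkers` is hypothetical):

```javascript
// Returns true when the current environment exposes the Worker constructor.
function supportsWebWorkers() {
  return typeof Worker !== 'undefined';
}

if (supportsWebWorkers()) {
  console.log('Web Workers are supported');
} else {
  console.log('Web Workers are not supported; fall back to the main thread');
}
```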

A quick example

Main script:
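A hedged sketch of what the main script could look like. The file name `worker.js` matches the heading below, while the function name `startSumWorker` and the idea of summing a list of numbers are assumptions made for illustration.

```javascript
// main.js: runs on the main thread.
function startSumWorker(numbers) {
  const worker = new Worker('worker.js');

  // Called when the worker posts its result back.
  worker.onmessage = (event) => {
    console.log('main:: sum received from worker:', event.data);
    worker.terminate(); // the worker is no longer needed
  };

  worker.onerror = (error) => {
    console.error('main:: worker failed:', error.message);
  };

  // The array is copied to the worker via structured cloning.
  worker.postMessage(numbers);
  return worker;
}

// Only start the worker where the API exists (for example, in a browser).
if (typeof Worker !== 'undefined') {
  startSumWorker([1, 2, 3, 4]);
}
```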

worker.js:
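A matching sketch for the worker side, assuming the main script posts an array of numbers and expects their sum back. The `sum` helper and the guard around the handler are illustrative choices.

```javascript
// worker.js: runs on the worker thread.
function sum(numbers) {
  return numbers.reduce((total, n) => total + n, 0);
}

// Register the handler only inside a worker, so the file can also be
// loaded elsewhere (for example in a unit test) without side effects.
if (typeof WorkerGlobalScope !== 'undefined' && self instanceof WorkerGlobalScope) {
  self.onmessage = (event) => {
    // event.data holds the cloned array sent by the main thread.
    self.postMessage(sum(event.data));
  };
}
```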

Shared Worker vs Dedicated Worker

Scripts running in multiple windows can access the same worker script using the SharedWorker API.

Each script communicates with the shared worker through a MessagePort, exposed as the SharedWorker.port property, which is used to send and receive messages. Browser support for Shared Workers is not as good as for Dedicated Workers, with just 35% coverage.

The scripts sharing the worker must belong to the same origin (protocol, host and port).

Regardless, below is an example snippet demonstrating the use of shared workers for sending and receiving messages:

On the Main scripts:
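A minimal sketch for the page side. The script name `shared-worker.js`, the function name `connectToSharedWorker`, and the message text are assumptions for this example.

```javascript
// Page script: the same code can run in several tabs of the same origin.
function connectToSharedWorker(onMessage) {
  const worker = new SharedWorker('shared-worker.js');

  // Assigning onmessage implicitly starts the port.
  worker.port.onmessage = (event) => onMessage(event.data);

  worker.port.postMessage('hello from a page');
  return worker;
}

// Only connect where the API exists.
if (typeof SharedWorker !== 'undefined') {
  connectToSharedWorker((data) => console.log('main:: received', data));
}
```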

On the worker script:
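A matching sketch for the shared worker, which here relays every incoming message to all connected pages. The relay behaviour and the names `connectedPorts` and `handleConnect` are assumptions chosen for illustration.

```javascript
// shared-worker.js: one instance serves every connected page.
const connectedPorts = [];

// Called once for each page that creates a SharedWorker for this script.
function handleConnect(event) {
  const port = event.ports[0];
  connectedPorts.push(port);

  port.onmessage = (message) => {
    // Relay the message to every connected page, including the sender.
    for (const target of connectedPorts) {
      target.postMessage(`worker:: relayed "${message.data}"`);
    }
  };
}

// Register the handler only when running as a shared worker.
if (typeof SharedWorkerGlobalScope !== 'undefined' && self instanceof SharedWorkerGlobalScope) {
  self.onconnect = handleConnect;
}
```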

Service Worker vs Web Worker

Service Workers are outside the scope of this document, but it is still useful to understand the basic difference between the two types of workers.

Service workers act as a proxy between the web application and the network: they run in the background and can intercept requests to external data sources such as APIs. They are used to manage Push Notifications, Periodic Sync, etc., and to cache content for offline use in Progressive Web Applications (PWA).

Web Workers are primarily used to run CPU-intensive tasks in the background, typically tasks that do not depend on network connectivity.

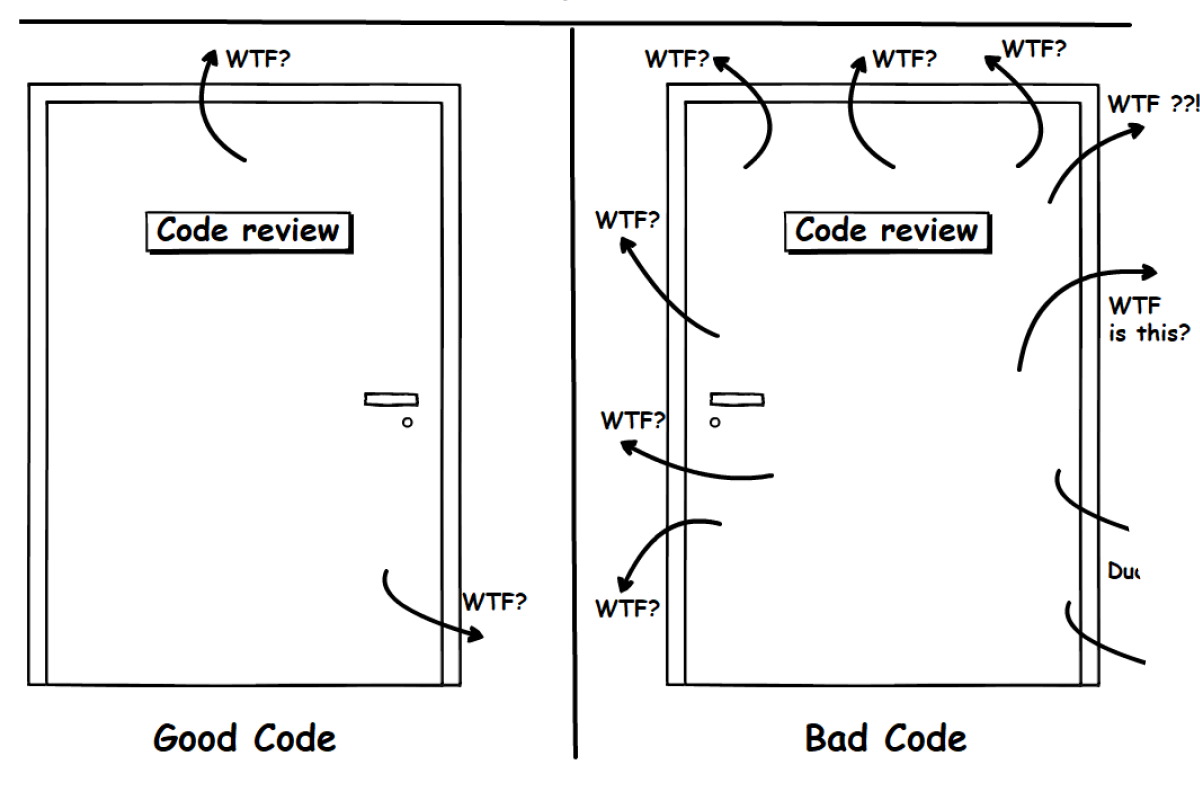

Structured cloning algorithm

Under the hood, the postMessage function uses the structured clone algorithm to recursively copy the object being sent to the worker thread. This algorithm cannot clone functions or DOM nodes; trying to send them throws a DataCloneError exception. If you want to call functions across threads, please refer to the Comlink library, which is mentioned later in this document. The algorithm is implemented natively and is already well optimised, so we should not try to replace it with a hand-written alternative in the hope of cloning data faster.
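The behaviour can be observed without a worker through the global `structuredClone()` function, which applies the same algorithm. This sketch assumes an environment that provides it (recent browsers, or Node.js 17 and later); the helper name `canBeCloned` is hypothetical.

```javascript
// A deep copy: changing the clone does not affect the original.
const original = { user: 'octocat', tags: ['a', 'b'], created: new Date(0) };
const copy = structuredClone(original);
copy.tags.push('c');
console.log(original.tags.length, copy.tags.length);

// Reports whether a value survives structured cloning.
function canBeCloned(value) {
  try {
    structuredClone(value);
    return true;
  } catch (error) {
    if (error.name === 'DataCloneError') return false;
    throw error; // anything else is unexpected
  }
}

console.log(canBeCloned({ id: 1 }));          // plain data
console.log(canBeCloned(() => 'not data'));   // functions cannot be cloned
```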

Data transfer solutions

When working with large data objects, it is desirable (although admittedly an unusual requirement) to hand the data over without copying or cloning it between the main thread and the worker thread. For this scenario, the Web Workers API supports zero-copy transfer of messages in either direction. Objects that can be moved this way are called Transferable objects.

Transferable objects

When working with transferable objects, you can think of the transfer as handing over a reference to the data from one thread to the other, without making a copy of the data object. This improves performance when passing messages between threads. Once the object is transferred to the worker, it can no longer be accessed from the main thread, and vice versa.
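The loss of access on the sending side can be sketched without a worker by using `structuredClone()` with its `transfer` option, which moves an ArrayBuffer in the same way as `postMessage(data, [data])`. The helper name `transferBuffer` is an assumption, and the sketch requires an environment that provides `structuredClone()`.

```javascript
// Moves the buffer's memory to a new owner instead of copying it.
function transferBuffer(buffer) {
  return structuredClone(buffer, { transfer: [buffer] });
}

const source = new Uint8Array([1, 2, 3, 4]).buffer;
const moved = transferBuffer(source);

// The source buffer is now detached and reports a length of zero,
// while the moved buffer holds the original four bytes.
console.log('source bytes:', source.byteLength);
console.log('moved bytes:', moved.byteLength);

// With a real worker the equivalent call would be:
//   worker.postMessage(buffer, [buffer]);
```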

Shared Array Buffer

SharedArrayBuffer is used to share the same block of memory between two contexts, such as the main thread and a worker thread, with side effects: changes made in one context become visible in the other. A SharedArrayBuffer is not transferred; once it is passed to a worker via postMessage, both threads refer to the same memory.

SharedArrayBuffer is a fixed-length array buffer and can be used to create different data views like Typed Arrays.
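A short sketch of creating typed-array views over one SharedArrayBuffer. The helper name `createSharedInts` is hypothetical, and in browsers the `SharedArrayBuffer` constructor is only available on cross-origin isolated pages.

```javascript
// Allocates shared memory for `length` 32-bit integers and returns a view on it.
function createSharedInts(length) {
  const shared = new SharedArrayBuffer(length * Int32Array.BYTES_PER_ELEMENT);
  return new Int32Array(shared);
}

const viewA = createSharedInts(4);
// A second view over the same memory, as a worker would create after
// receiving the buffer through postMessage.
const viewB = new Int32Array(viewA.buffer);

viewA[0] = 42;
console.log(viewB[0]); // both views read the same memory
```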

Atomics

Because a SharedArrayBuffer is a common memory block, a change made in one context may take a while to become visible in the other. To keep data consistent across threads and to avoid race conditions in multi-threaded applications, atomic operations are used. The Atomics object provides these operations as static methods, which work on typed-array views over SharedArrayBuffer and ArrayBuffer objects.
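A minimal sketch of an atomic counter, assuming an Int32Array view over shared memory. The helper name `incrementCounter` is hypothetical.

```javascript
// Atomically adds 1 to the slot and returns the new value.
// Atomics.add itself returns the value that was stored before the addition.
function incrementCounter(counters, index) {
  return Atomics.add(counters, index, 1) + 1;
}

const counters = new Int32Array(new SharedArrayBuffer(8));
incrementCounter(counters, 0);
incrementCounter(counters, 0);

// Atomics.load reads the slot without tearing.
console.log(Atomics.load(counters, 0));
```

If several threads call `incrementCounter` on the same slot, no increment is lost, which a plain `counters[0]++` cannot guarantee.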

Security Context and Cross-Origin Isolation

SharedArrayBuffer and Atomics are not the approach we would choose for now, since we can achieve what we want with Transferable objects, which are available to only one context at a time and are not shared. The security requirement described below is a further reason to decide against them.

SharedArrayBuffer was disabled by default in major browsers in 2018 as a mitigation against Spectre, a class of side-channel attacks that exploit speculative execution in modern CPUs. Shared memory can be used to build the high-resolution timer that such attacks rely on.

SharedArrayBuffer has since been re-enabled in some browsers, and the support coverage is roughly 70%.

Now, SharedArrayBuffer has a security requirement of Cross Origin Isolation being enabled before we can use it. This can be enabled by setting the following headers and values:

Cross-Origin-Opener-Policy: same-origin

Cross-Origin-Embedder-Policy: require-corp
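One possible way to send these headers from a Node server is a small middleware function. This sketch assumes an Express-style `(req, res, next)` signature; the function name `crossOriginIsolation` is hypothetical.

```javascript
// Adds the two headers required for cross-origin isolation to every response.
function crossOriginIsolation(req, res, next) {
  res.setHeader('Cross-Origin-Opener-Policy', 'same-origin');
  res.setHeader('Cross-Origin-Embedder-Policy', 'require-corp');
  next();
}

// Usage with an Express app (assumed to exist):
//   app.use(crossOriginIsolation);
```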

To verify if cross-origin isolation is enabled in the browser, we can use the following snippet:
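A minimal check based on the global `crossOriginIsolated` property, which browsers expose on windows and workers. The helper name `isCrossOriginIsolated` is hypothetical.

```javascript
// True only when the page (or worker) is cross-origin isolated.
function isCrossOriginIsolated() {
  return globalThis.crossOriginIsolated === true;
}

if (isCrossOriginIsolated()) {
  console.log('Cross-origin isolated: SharedArrayBuffer can be used');
} else {
  console.log('Not cross-origin isolated: SharedArrayBuffer is unavailable');
}
```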

Transferable objects (contd.)

Enough theory. Let's get our hands dirty by experimenting with data transfer between the main thread and the worker thread.

Below is the link to the GitHub repository for our proof of concept, which uses web workers with and without Transferable objects.

https://github.com/benestudio/iws-web-worker-react

We have a bare-bones React application for exporting a huge JSON dataset (~291 MB in size) as a CSV file.

The sample data is compiled from the GitHub users API and duplicated to form a large array of 342000 items. This static backend data is served by the json.js file, a simple Express Node server that exposes the large JSON data through a GET REST endpoint: /data2.

The React front-end App consists of two components: App.js and WorkerInfo.js.

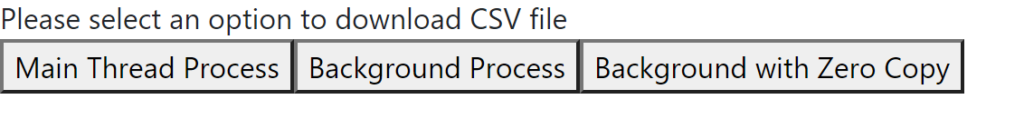

The react frontend app has three buttons, one each for the following three modes:

- Mode-1: Downloading JSON as CSV without using WebWorker API

Button named: Main Thread Process

- Mode-2: Downloading JSON as CSV using WebWorker API with full copy (Structured Cloning)

Button named: Background Process

- Mode-3: Downloading JSON as CSV using WebWorker API with zero copy (Transferable Objects)

Button named: Background with Zero Copy

Below is the Button Group whose “id” prop defines which mode of operation is being triggered:

Below is the state variable used to hold the button id to trigger the download of CSV file on state change during user interaction with these buttons:

The WorkerInfo component shows text information about the operation requested by the user, together with the time taken to perform it. The mode state is passed to the WorkerInfo component as its workerMode prop, so the operation is performed only when the state value changes. This means that clicking the same button twice in a row does not trigger the operation again.

The code below demonstrates the usage of this workerMode prop passed on from the App component:

The corresponding operations based on workerMode are triggered using this useEffect hook, since the interaction with the worker thread from the main thread is considered as a side effect.

We have a couple of animated elements below the button to demonstrate that UI is not responsive whenever the process is executed in the Main thread.

The first UI element is a Bootstrap CSS spinner which is not affected by Main thread execution.

The second UI element (with green background and a red box) is a pure Javascript-based animation to move a box within its parent.

You may notice that during CPU-intensive operation of loading the huge JSON data, the animation gets blocked on the Main thread, and resumes once the data processing is complete.

Apart from the less fancy animation, let’s focus on the console logs with timestamps. To distinguish between the two threads, the logs from the worker thread are prefixed with “worker::”, and the logs from the main thread are prefixed with “main::”.

Preparing for Buffer Transfer:

JSON objects cannot be transferred directly between the main thread and the worker thread; they can only be cloned. To transfer the data, we need to convert it into one of the Transferable object types, such as ArrayBuffer. In our example, we are exporting the data into a CSV file. We use the “d3” library to format the JSON data into a CSV-formatted string.

The below function is used to convert the array of Javascript objects into CSV formatted string:
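The repository relies on d3 for this step. As a dependency-free stand-in (not the d3 implementation), the conversion can be sketched as follows; the names `toCsv` and `escapeCsvField` are hypothetical, and the sketch assumes every row has the same keys as the first one.

```javascript
// Quotes a field when it contains a comma, a quote or a line break.
function escapeCsvField(value) {
  const text = value === null || value === undefined ? '' : String(value);
  return /[",\n\r]/.test(text) ? `"${text.replace(/"/g, '""')}"` : text;
}

// Converts an array of flat objects into a CSV string with a header row.
function toCsv(rows) {
  if (rows.length === 0) return '';
  const columns = Object.keys(rows[0]);
  const header = columns.map(escapeCsvField).join(',');
  const lines = rows.map((row) =>
    columns.map((column) => escapeCsvField(row[column])).join(',')
  );
  return [header, ...lines].join('\n');
}

console.log(toCsv([{ login: 'octocat', id: 1 }, { login: 'hub,bot', id: 2 }]));
```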

Next, we convert the CSV string into a Blob (for file operations), which is then converted into an ArrayBuffer during transfer between Worker and Main threads.

The function below is used to convert the CSV data into a Blob binary data and then to ArrayBuffer transferable object:
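A hedged sketch of this conversion using the standard Blob API. The function name `csvToArrayBuffer` and the MIME type string are assumptions, not the repository's code.

```javascript
// Wraps the CSV text in a Blob and resolves with its bytes as an ArrayBuffer.
async function csvToArrayBuffer(csvText) {
  const blob = new Blob([csvText], { type: 'text/csv;charset=utf-8' });
  return blob.arrayBuffer();
}

csvToArrayBuffer('login,id\noctocat,1').then((buffer) => {
  console.log('worker:: buffer size in bytes:', buffer.byteLength);
});
```

The resulting ArrayBuffer is a Transferable object, so it can be listed in the second argument of postMessage.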

Transfer of CSV Blob content with full copy

The first test is to check the performance of using Web Worker with a full copy of CSV Blob data.

This is triggered by the second button of our sample application. Below code is used for CSV file conversion from Worker thread and transfer to Main thread using a full copy transfer:

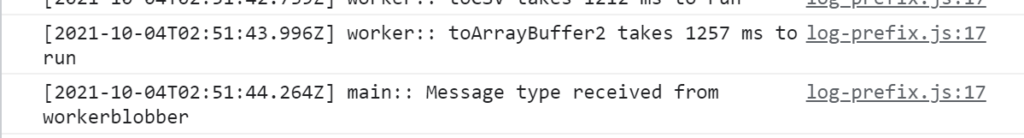

Please note the below two console log statements, when the transfer is happening from worker thread to main thread.

It takes 268ms to transfer the ArrayBuffer of the CSV Blob from the worker thread to the main thread using Full Structured Cloning of data.

Below is the result on UI with full copy transfer:

Transfer of CSV Blob content with zero copy

In contrast, the below log statements show better performance (in terms of ms), when we transfer the CSV Blob data through Transferable Objects. This is triggered by the third button of our sample application.

Below code is used for CSV file conversion from Worker thread and transfer to Main thread using a ZERO copy transfer:

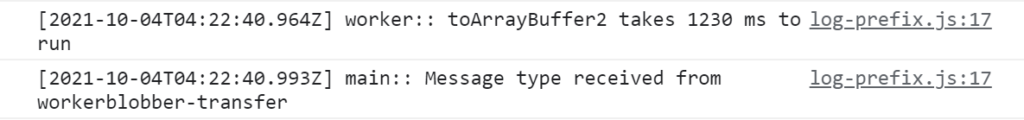

Please note the below two console log statements, when the transfer is happening from worker thread to main thread.

It takes 29ms to transfer the ArrayBuffer of CSV Blob, by using transferable object overload of the postMessage function.
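The two modes differ only in the second argument passed to postMessage. The sketch below puts both calls side by side; the helper name `sendBuffer` and its `zeroCopy` flag are assumptions for illustration.

```javascript
// Sends an ArrayBuffer to `target`, which can be a worker, a message port,
// or `self` inside a worker.
function sendBuffer(target, buffer, zeroCopy) {
  if (zeroCopy) {
    // Transfer list given: ownership moves and the sender's buffer is detached.
    target.postMessage(buffer, [buffer]);
  } else {
    // No transfer list: the buffer is copied by structured cloning.
    target.postMessage(buffer);
  }
}

// Inside the worker, once the CSV bytes are ready:
//   sendBuffer(self, arrayBuffer, true);
```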

Below is the result on UI with ZERO copy transfer:

By this comparison, it is evident that the performance of a Transferable object is better than full structured cloning of data.

However, the difference is not visible to the human eye at the front end, because it is in the order of milliseconds. A difference on this timescale can become significant on the server side of a high-traffic web application built on a JavaScript stack such as Node.js.

Moreover, the main (UI) thread is still blocked when using zero-copy Transferable objects, because converting the transferred ArrayBuffer into a downloadable Blob file takes considerable time. Overall, a full copy of the Blob object by the structured clone algorithm is no worse than a zero-copy transfer plus the time taken to convert the transferred binary data back into a Blob object.
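The conversion step on the main thread can be sketched as follows. The helper name `bufferToCsvBlob` is hypothetical, and the download steps are shown as comments because they need a browser DOM.

```javascript
// Wraps the received bytes in a Blob so the browser can offer them as a file.
function bufferToCsvBlob(buffer) {
  return new Blob([buffer], { type: 'text/csv' });
}

const blob = bufferToCsvBlob(new TextEncoder().encode('login,id\noctocat,1').buffer);
console.log('main:: blob size in bytes:', blob.size);

// In the browser, the Blob could then be downloaded through a temporary link:
//   const url = URL.createObjectURL(blob);
//   const link = document.createElement('a');
//   link.href = url;
//   link.download = 'users.csv';
//   link.click();
//   URL.revokeObjectURL(url);
```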

The Takeaway

The takeaway from this experiment is that zero-copy Transferable objects are best used when raw binary data has to move between the main thread and a worker thread.

If we want to pass JSON objects between the main thread and a worker thread, we are better off with the default copying done by the structured clone algorithm, which browsers handle natively.

Related libraries

Now that you have reached the end of the article, you may want to explore third-party libraries that make working with web workers much simpler.

- Comlink

(57k weekly downloads as of Sep-2021)

- React use-worker

(39k weekly downloads as of Sep-2021)
